Welcome to Krasse Links no 85. Imagine your path psychology: today we haunt the agency of the super-individuals by taking the BATNA away from their imagination.

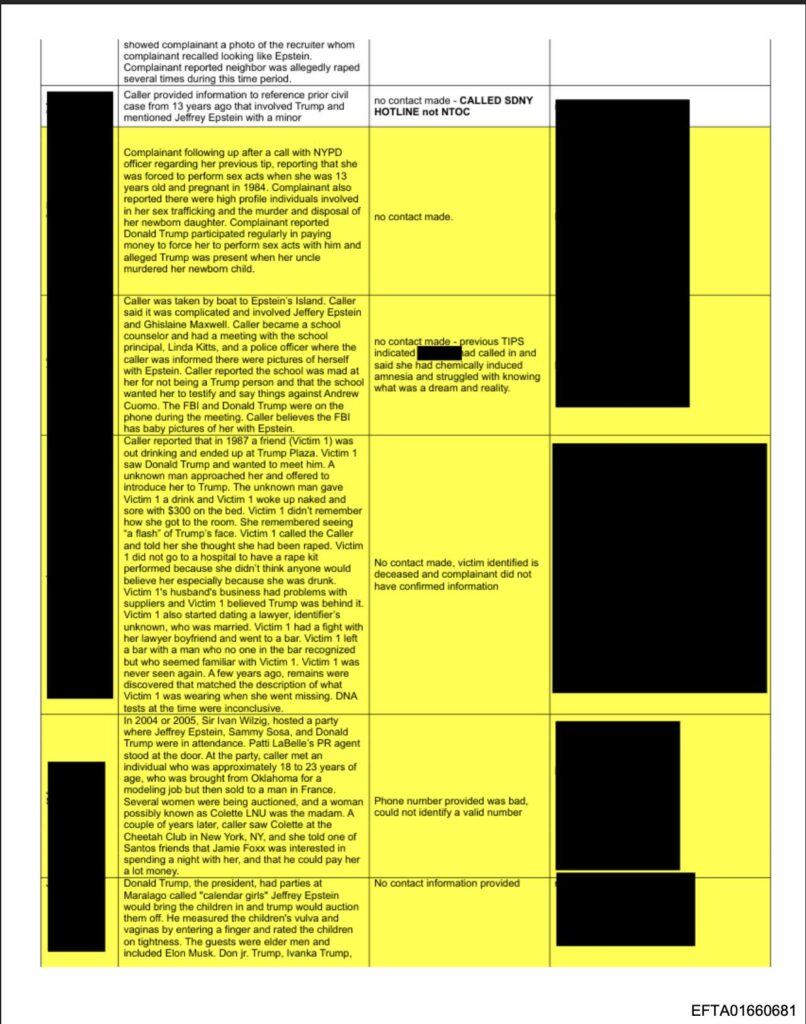

In the Atlantic, Noah Hawley reports on a private event a few years ago, hosted by Jeff Bezos, to which he and his family were invited (Epstein is not the only billionaire who simply buys the attention and recognition of people he considers important), and works through a few interesting observations.

The closer I’ve gotten to the world of wealth, the more I understand that being truly rich doesn’t mean amassing enough money to afford superyachts, private jets, or a million acres of land. It means that everything becomes effectively free. Any asset can be acquired but nothing can ever be lost, because for soon-to-be trillionaires, no level of loss could significantly change their global standing or personal power. For them, the word failure has ceased to mean anything.

This sense of invulnerability has deep psychological ramifications. If everything is free and nothing matters, then the world and other people exist only to be acted upon, if they are acknowledged at all. This is different from classic narcissism, in which a grandiose but fragile self-image can mask deep insecurity. What I’m talking about is a self-definition in which the individual grows to the size of the universe, and the universe vanishes. Asked recently if there is any check on his power, President Trump—himself a billionaire, and by far the richest president in American history—said, “Yeah, there is one thing. My own morality. My own mind. It’s the only thing that can stop me.” Not domestic or international law, not the will of the voters, not God or the centuries-old morality of civic and religious life.

My talk of “expectations”, “expectations of expectations”, “BATNA”, the Q-function and so on is already part of a growing toolkit of something one could call “path psychology” (Pfadpsychologie).

Path psychology is a relational-materialist psychology which, instead of looking “into the head”, takes the material conditions of the “head” as its starting point in order to construct plausible perspectives from them. One basic assumption is that our perception is part of the world in which it exists and is therefore shaped by that world's constraints. The Q-function, for example, as I understand it and work on it, tries to take the constraints of the path model as a starting point for reconstructing how human path decisions work. The Q-function is the infrastructure of dividual subjectivity.

I can borrow the Q-function as a concept from AI research because, as an engineering discipline, AI research faces the same problem I do:

What are the material conditions of “thinking” and “acting”? In AI research, the Q-function estimates the expected utility of a path (an action and what follows from it) in the context of a game state, which maps well onto the dividual in the path model.

If you then map the Q-function onto our complex world of (relationally) material infrastructures, you can approach it from the back end, as a question:

What questions does successful path navigation have to answer? You quickly arrive at the obvious ones: probability, utility, cost, risk. Then, with a little thought, you get to the only half-obvious ones, such as BATNA (the best alternative to a negotiated agreement).
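These questions can be turned into a toy calculation. What follows is a minimal sketch, not the Q-function of AI research and not a definition from this newsletter: the `Path` fields, the scoring formula in `q_value` and all numbers are assumptions chosen for illustration. It only shows the shape of the argument: a path is scored from probability, utility, cost and risk, and the score only means something relative to the BATNA.

```python
from dataclasses import dataclass


@dataclass
class Path:
    """One candidate path; every field is an illustrative assumption."""
    name: str
    probability: float  # expected chance that the path works out (0..1)
    utility: float      # expected benefit if it does
    cost: float         # expected cost of taking the path
    risk: float         # expected loss if it fails


def q_value(path: Path) -> float:
    """Toy score: probability-weighted utility minus cost and weighted risk."""
    return (path.probability * path.utility
            - path.cost
            - (1.0 - path.probability) * path.risk)


def evaluate(offer: Path, batna: Path) -> tuple[float, bool]:
    """Return the offer's advantage over the BATNA and whether to accept it."""
    advantage = q_value(offer) - q_value(batna)
    return advantage, advantage > 0


# Hypothetical numbers, in arbitrary units.
offer = Path("new job", probability=0.8, utility=100.0, cost=20.0, risk=30.0)
batna = Path("stay put", probability=0.9, utility=60.0, cost=5.0, risk=10.0)
advantage, accept = evaluate(offer, batna)
```

With these made-up numbers the offer scores slightly higher than the fallback, so `accept` is true. Lower the fallback's cost or raise its utility and the same offer stops being worth taking, which is the sense in which the BATNA sets the baseline.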

Important: path psychology is not meant to replace psychology, nor to negate the “soul” or the “mind”; it is agnostic towards these concepts. What path psychology tries to do is explain the part of subjectivity that can be explained more plausibly from the subject's material conditions than from its “inner” subjectivity.

In any case, what Hawley describes here can be described in path-psychological terms as the atrophy of the cost sensor of one's own Q-function. Rich people no longer feel any pain when paying for things. The activation costs K_F_a become, in relative terms, “peanuts”, and the wear-and-tear costs K_F_v are almost always externalized (arrogance, regulatory capture, exploitation and a huge army of lawyers). The result is a weightless world.

But the K_F_v have not gone away, have they? They are only externalized, that is, located somewhere else. In political-economic terms, super-individuals are the endpoints of capitalism's externalization infrastructure, and the costs of this psychological effect of weightlessness are borne by us: through billions of our tax money, a littered outer space, exploited people, a collapsing climate, our addressee-less Epstein rage, the AI madness and the end of democracy.

But there is something else: with growing wealth, the write-off costs K_F_ab simultaneously pile up towards infinity. Because they do not materially accrue in the moment but are only booked when the current path is abandoned, they are easy to repress.

K_F_ab is the “height of fall” of one's own fulcrum. It consists of the accumulated switching costs, i.e. the stacked dependencies that make the fulcrum possible, and of its marginal utility as the F(Q) difference to the BATNA path (where you often have to rebuild everything from scratch).

You do not have to calculate K_F_ab, you only have to know: the higher you stand, the greater the potential fall. And the deeper you place the branch point to the alternative path, the more K_F_ab can shoot up, because many paths rest on that early path decision and depend on it. This is a measurable effect that we also call “lock-in”. You can talk someone into changing jobs, but when it comes to abolishing capitalism, most people believe they have too much to lose.
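The lock-in effect can be illustrated with a small dependency tree. This is a sketch under stated assumptions: the tree, the path names and the values are invented, and summing everything that builds on a path is just one simple way to read “stacked dependencies”.

```python
# Hypothetical dependency tree: each path lists the paths that build on it.
DEPENDENTS = {
    "capitalism": ["career", "home ownership"],
    "career": ["current job"],
    "home ownership": [],
    "current job": [],
}

# Hypothetical value that has to be written off when a path is abandoned.
OWN_VALUE = {
    "capitalism": 0.0,
    "career": 50.0,
    "home ownership": 200.0,
    "current job": 10.0,
}


def write_off_cost(path: str) -> float:
    """Toy K_F_ab of leaving `path`: its own value plus everything built on it."""
    return OWN_VALUE[path] + sum(write_off_cost(d) for d in DEPENDENTS[path])


costs = {path: write_off_cost(path) for path in DEPENDENTS}
```

In this toy tree, leaving the current job writes off only the job itself, while branching off at the root writes off everything stacked on top of it. The earlier the branch point, the more dependent paths are dragged along.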

Decades of research in developmental psychology have shown that moral reasoning develops through consequences — not punishment, necessarily, but experiencing the effects of your actions on others, receiving honest feedback, having to accommodate reality as it actually is rather than as you wish it to be. It’s not that the wealthy become evil; it’s that their environment stops teaching them the things that nonwealthy people are forced to learn simply by living in a world that pushes back. When you can buy your way out of any mistake, when you can fire anyone who disagrees with you, when your social circle consists entirely of people who need something from you, the basic mechanism by which humans learn that other people are real goes dark.

In this newsletter I like to refer to billionaires as “super-individuals”. If the pathology of the individual as a subject design consists in attributing the infrastructures on which one's actions depend to oneself (to one's “own” agency, “freedom”, “intelligence”), then it follows that the “individual”, as a path-psychological subjectivation function, scales along with its infrastructures. This internalization of the scaling of path opportunities becomes easier the more frictionlessly the infrastructure “obeys my will”, which is to say: when all friction costs K_F_v are externalized.

Because their “agency” is huge and they have lost any feeling for the costs of their infrastructure, the ego of the super-individuals rises over the world every day, like the moon. Wherever they step, the infrastructure they need “grows”, and wherever they fall, hundreds compete to catch them. And even if you personally are not up for it, you too are, one way or another, their externalization cost center.

Charlie Warzel writes in the Atlantic about the general feeling of loss of control in the face of AI. The whole text is worth reading and covers many phenomena, but I want to draw attention to one passage in particular.

It’s unsurprising, perhaps, that at the same time when Silicon Valley is building and breathlessly promoting these tools—self-directed agents that can accomplish complex tasks without human supervision—many of its loudest voices have grown obsessed with the idea of their own agency. In builder circles, people deemed “high-agency” sit atop the hierarchy. They are individualistic, ambitious, focused. They just do things. They are especially adept at marshaling the use of people and machines alike. It is implied that those with high agency are, for now, insulated from becoming replaceable or irrelevant in a time of great precarity—not yet doomed to be part of “the permanent underclass,” another Bay Area coinage for the late adopters who will be left behind. How could a person hear such language and not feel at least a little paranoid?

“AI agents” are admittedly quite expensive, but they offer a foretaste of super-individual psychology that is becoming affordable at least for millionaires. AI works as the “trickle-down” effect of madness.

But in rudimentary form, this madness was always there. It is in me, in you, in most of us, sometimes more, sometimes less. We were raised as individuals, and Trump, Musk, Epstein and Thiel are not grotesque deviations from this norm; at bottom they are just caricatures of our subject design, thought through to the end.

Because not only the “super-individual” but the “individual” itself is measured in “agency”, isn't it?

When Isaiah Berlin drew the well-known distinction between “positive” and “negative liberty”, he had a clear definition of negative liberty (freedom from coercion), but he waffles comparatively on “positive liberty” (the freedom “to” do something, the enabling freedom). Of course he did not have the path model, with which positive liberty can simply be defined as the sum of available path opportunities, but as an “enlightened individual” he probably would not have gone along with that anyway.

Berlin draws the boundary of agency at the human body and defines “positive liberty” via the detour of “health”. For him, positive liberty is the threshold of path opportunities of a “healthy person”, minus “external” infrastructure, which individual epistemology assigns to the realm of “objects” and which therefore has nothing to do with the individual.

And not only that: my thesis is that the “individual” has always been an ableist, patriarchal, racist, colonialist-capitalist subject design. From the start it only ever meant a healthy, rich, European, white, heterosexual cis man, and it was extended to other subjects only step by step, and often only recently.

From the point of view of the “individual”, deviations from this are “diminished” individuals. Ishay Landa has already pointed out that this is exactly where the intersection (or better: tipping point) between liberalism and fascism lies. That makes sense when you consider that the Western manifestations of racism, misogyny, classism and sexism all presuppose the “individual view of the world”, in which agency is the source of human “worth”.

Rating people by their agency does not fully cover these phenomena, but it forms the relationally material foundation of all these structures. Your worth as an individual is measured by your socially ascribed potential for action, your agency. (Your potential for action, Handlungspotential, also determines your potential for negotiation, Verhandlungspotential: your BATNA.)

Potential for action sounds abstract, but in reality it is all the collected paths that you and/or society believe someone capable of, together with the perceived “value” of each of those paths (their rough Q-function).

In relational materialism we distinguish three layers of reality:

- Layer 1: The network of material infrastructures, their actual fulcrums and their actual transition probabilities. Messy, grindy, complex and unknowable.

- Layer 2: The network of your expectations. Closely linked to Layer 1 and crammed with personal expectation portfolios [F3]. The Q-function uses these expectations to evaluate the paths into the future “to the best of human judgment”. Much more complex than you would think, and yet half real, half hallucinated.

- Layer 3: The network of expected expectations. Based on Layers 1 and 2. Turns out: your Q-function is never yours. All the relevant values (probability expectations, utility expectations, cost estimates) were at some point copied from others and then developed further personally, but they remain socially pre-structured [F7]. Whether the expectations you expect others to have of you actually match reality is another question.

“Agency” is “status” in the framework of the individual, and we can now look at it on each of the three layers of reality:

- Agency(L1) is your topological location in the network of material fulcrums. It is the actual levers available to you and the actual stability of the paths that depend on them, minus the full costs.

- Agency(L2) is the status you believe you have in life. L1 does constrain L2 (you cannot wish yourself into being a millionaire, though many try), but there is a lot of room for deviation here: first, because society has many status games other than the material ones, and second, because your own status in any status game remains uncertain.

- Agency(L3) is the agency you believe others ascribe to you. It is not independent of L1 and L2 but can still diverge from them arbitrarily; normally it is oriented towards the expectation portfolios that society generally draws on [F7] for “people like you”. This uncertainty is the source of “social anxiety”.

With this we can analyze the status game of the agent-supervising super-individuals in front of their screens. Before the agents, Agency(L1) was measured by one's own pathfinding skills within the infrastructure in question (programming languages, IDEs, frameworks, libraries, servers, etc.). The more of these paths are individually “available”, the higher the self-ascribed Agency(L2), which programmers then negotiate among themselves while hoping to extract a higher labor margin from the client/capitalist (Agency(L3)).

The agents now without question increase the programmers' Agency(L1), which affects their Agency(L2) (super-individual syndrome), and they now compete with each other over the most productive and efficient use of agents in order to belong to the “permanent upper class” (LOL!). But whether this rat race really affects the Agency(L3) they assume their boss ascribes to them is not questioned at all, because the transmission belt from one's own Agency(L2) expectation to a leverageable labor margin is assumed to be intact.

But think about it. Of course the math will not work out: if what sets you apart is the number of agents you leverage (ideally even measured in “tokens consumed”), then from your boss's point of view the BATNA path to you is always only a few million tokens away.

You lost your moat.*

* Moat = the delta between the activation costs K_F_a of the paths you offer and the activation costs of the BATNA paths to you, K_F_a_BATNA.

Hint: it is always a bad idea to turn your own leverage (the other party's dependence on you) from a multidimensional graph (a knowledge portfolio of different solution paths in heterogeneous but specific environments) into a linear graph (tokens).
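The moat argument can be made concrete with a few lines of arithmetic. This is only a sketch: the sign convention (BATNA cost minus own cost, so that a positive moat means replacing you is expensive), the function name `moat`, the token price and all numbers are assumptions for illustration.

```python
def moat(own_activation_cost: float, batna_activation_cost: float) -> float:
    """Toy moat: K_F_a_BATNA - K_F_a, i.e. how much costlier the alternative is."""
    return batna_activation_cost - own_activation_cost


# Hypothetical specialist: replacing the knowledge portfolio is expensive.
specialist = moat(own_activation_cost=100.0, batna_activation_cost=400.0)

# Hypothetical token worker: anyone else can buy the same tokens at the
# same (assumed) price, so the alternative costs exactly what you cost.
tokens = 5_000_000
price_per_token = 0.00001
token_worker = moat(own_activation_cost=tokens * price_per_token,
                    batna_activation_cost=tokens * price_per_token)
```

Once the contribution is measured on a single linear axis, the cost of the alternative collapses onto your own cost and the delta goes to zero.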

Tadzio Müller is currently writing a new book and has given a foretaste of it in his newsletter, which is well worth reading. In it he reflects on his early activist years in Great Britain, which he spent, among other things, protesting (unsuccessfully) against the country's involvement in the “coalition of the willing” in the Iraq war. On paper it looked as if they had a chance, because a large majority of Britons were against the war.

big demonstrations and attention-grabbing actions were supposed to produce clear social majorities for or against something, and then the government would surely cave in, because alienating social majorities ought to be a problem for a democratically elected government.

Turns out: you can very well make policy against the will of the majority. Namely when the majority has no way of expressing that will.

But is it? The statement sounds plausible at first, but think yourselves into the British two-party system (of the time): if the only realistic choice is between Labour and the Tories, the Tories are in favor of wars on principle, and Labour is pushing this particular war, then “shifting public opinion” is precisely not a lever, because there were no effective electoral means of punishment in case Labour went to war. “Whatcha gonna do – vote for the effin' Tories, ey?”

What Tadzio describes so clearly here could also be called “the unforced force of the missing BATNA”. The Labour Party was habitually the BATNA to conservative politics, but Tony Blair took that path off the shelves.

This is exactly why Margaret Thatcher is credited with seeing Tony Blair as her most important legacy. “There is no alternative” (TINA) only became material reality (democratic Agency(L1)) with Blair and his “Third Way” for social democracy. The path to class struggle, the path against exploitation and imperialism, was co-opted by “capitalism-friendly” forces, and ever since the Social Democrats have been the “comrades of the bosses” (Genossen der Bosse), not only in the UK but almost everywhere in Europe.

Agency, it turns out, is not the paths you can take but the paths you can refuse. The ability to refuse a path sits deeper than any positive Agency(L1); it is what makes the latter a “reality” in the first place.

My thesis: the Q-function is an evolutionary necessity (I have already laid this out in the path-opportunity explainer) for evaluating paths before I make a choice. The Q-function creates the very possibility of saying “no”. It translates Agency(L1) into Agency(L2) and thereby also creates Agency(L0), which makes any freedom on the other layers possible in the first place but remains independent of them.

You can always say “no” if you are willing to bear the K_F_ab costs.

You can emigrate, for example, or overthrow the system. As much as the British were against the war, these costs were too high for most of them. And that is the actual logic of governing: reducing the BATNA paths down to the pain point of the system's switching costs.

In 2021 the Stiftung Klimaneutralität published a paper that examined the climate protection measures in the parties' election programs. One of the findings was that not even the Greens had anywhere near enough climate protection measures in their program to meet the 1.5 degree target. (As a reminder: in 2021 that was still conceivable, before we found out that temperatures are rising much faster than the models predicted …)

Path psychology also determines our imagination. Ever since not even the Greens offer climate protection measures in their election programs that would suffice to prevent the worst, the possibility of not sliding unchecked into full-blown catastrophe has more or less vanished from the portfolio. The deal with capital is done before the election posters are put up. That the FDP, right-wing think tanks, Springer campaigns and later Katherina Reiche then went on to sabotage the few measures that were actually supposed to be implemented matters less for the general sense of “what is possible” than this one path decision by the Greens.

And if you ask the Greens themselves why that is, they say that this is simply the maximum of what is “politically possible”. Then everyone shrugs and says “oh well”, when the real question ought to be who models these “political possibilities”. If it is not the parties, who is it?

“Public opinion” is then cited. But what is that? It is the sum of the interpretive paths of current political reality and their respective network power in the media drum concert of the public sphere.

Paths whose necessary infrastructures do not exist in Agency(L1) cannot be imagined. Our imagination does not exist in a vacuum either. The reason we are able to imagine “unicorns” is that we know both horses and horns. Together they yield an imaginary path opportunity to the “unicorn”. Science fiction is based on observations in reality that are extrapolated into the future. Sci-fi stories are Q-functions for current Agency(L1) paths, extended into the future.

Conversely, we have resigned ourselves to the climate catastrophe because real climate protection is, as they say, “politically not feasible”, and since then I have thought that it is time to generalize the famous Fredric Jameson quote, “It’s easier to imagine the end of the world, than the end of capitalism”, in path-psychological terms:

Alternative political paths into the future become implausible when material access to the infrastructures they require (Agency(L1)) correlates with an interest in the status quo (the respective K_F_ab).

Think about it: the more material possibilities you have, the more dependent you are on the current system, and the more you will do everything to preserve the status quo. That is why real change can only ever come from below. Everyone who has power on the current path is corrupted from the outset, and whoever gains power quickly becomes so.

This is not a statement about individual dividuals. There may be people with money, infrastructure and status who are nevertheless willing to resist, to leave the current path and to pay the price for it (whatever it may look like). But they are very, very rare.

But if you think about it for longer, we have always been governed less by governments than by the design of the “choice architectures” of our political path landscape. What is not on the menu cannot be ordered. Behind liberal gaslighting narratives such as “democracy needs compromise” or “politics is the slow boring of hard boards” there is in reality the “unforced force of the missing BATNA path”, which reaches us in phrases like “that was simply the best we could get out of it.”

We have to understand that our path opportunities are “made”, not only in the sense that current policy is “made”, but in the sense that the path topology from which we selected it is “made” as well. The path topology is of course not modeled by “one hand”, but by a few hands, over many decades and with different tools: party bans, the capitalist graph capture (Graphnahme) of the media, the capitalist co-optation of political BATNA paths, but also mechanisms such as the “debt brake” (Schuldenbremse). The latter is basically a fiscal default barrier for all political paths, through which the oligarchy (to whose interests it is always permeable) lays out for each government the paths available to it.

Liberalism has talked us into believing that the world is steered by “information” (“individual”, “market”, “systems theory”, “AI”) and that democracy is therefore a “competition of ideas”. What bullshit. Talking us out of class antagonism was capital's power move.

And yet we experience it every day: our Q-function is opposed to that of the capitalist. His advantage is our disadvantage. Again: not always in every detail, but in all the decisive questions of power, resources, politics, future and agency. To obscure this, liberalism devised the “marketplace of ideas”, in which you are allowed to position yourself within the matrix of the capitalists' notion of political paths.

And that is the point: the current Agency(L3), the “individual freedom” we are granted, is worth nothing. You can have all the paths in the world at your disposal. But if your Agency(L1) does not offer you a path that averts the catastrophe you are heading for, then you are not free.

If we want to prevent that, we have to find a Q-function that makes exercising collective Agency(L0) plausible again.

The most important panel at this year's re:publica was not about technology, the internet or AI, but about class. Geraldine de Bastion moderated Hanno Sauer and Mareice Kaiser, who have both written a book about class, and in its staging the whole panel explained more about class than any book could. There was a lot of coverage of the open comparison of advances, but that was only the beginning of a panel that was instructive from start to finish.

I already discussed the panel with Ali in the last Krasse Links podcast, so I will only summarize very briefly here:

On the Agency(L1) level you can take into account the different material starting conditions of the speakers and see how they determine the imagination of the dividuals, their Agency(L2). Hanno Sauer grew up in a household of professors and always dreamed of writing a book, whereas for Mareice Kaiser, as a working-class child, that did not exist in the space of plausibility at all until, as she reported, education and social and media advancement brought her to a point where it became a plausible income path for her.

BATNA is of course also decisive here: the book market is a precarious affair, at least for all newcomers (Berit Glanz has described the book market well for outsiders in this article) and for everyone who does not write super-bestsellers in series. Writing books is a path that always needs a BATNA, a fallback option for when the advance is not enough (which is most of the time), and success is never guaranteed. Despite the 160,000 euro advance, even a Hanno Sauer would not write books without a BATNA path, and his BATNA would presumably forbid him from writing the book for less. As a reminder: Agency(L1) determines not only your power to act but also your power to negotiate. The path to a book is structurally open only to the privileged, and the big advances only to those who do not need them.

And this gap becomes even more palpable when Mareice Kaiser asks Agnieszka Jastrzebska onto the stage. Jastrzebska works for a supplier to Berlin's Charité and is literally fighting for survival. The wages are not enough for that, which is why she and her colleagues have gone on strike. Nobody would even think of asking her whether she dreams of writing a book.

When Agnieszka Jastrzebska came onto the stage, the whole liberal world collapsed. The perspectives of Hanno Sauer and Mareice Kaiser already seemed barely reconcilable, but only Jastrzebska's appearance made clear that they are irreducible. None of the three perspectives could be reduced to another. Three completely different Agency(L1, L2, L3) positions sat next to each other, with three completely differently structured perspectives.

And that in turn made re:publica itself collapse, because it became clear that the spectrum of perspectives shown here had so far been cut off below the Agency(L3) of Mareice Kaiser. Normally it is mainly BATNA-sated media people who exchange views here, and they were torn out of their comfort zone by the surplus of reality that Jastrzebska brought onto the stage (representative of the agency differences in the country).

Agnieszka Jastrzebska was celebrated by the audience, which was nice to see. But that is of course not her reality. When she and her colleagues work their way through the rooms of the Charité, most people do not notice her, and many of those who applauded her would hardly have noticed her in any other situation. I do not exempt myself from that.

But I, too, can absolutely understand the impulse to celebrate Jastrzebska. Of the three, she personally has the least to gain on the panel and, through the exposure, the most to lose. Her fight is not a fight for status (Agency(L3)) but for survival (Agency(L1)). But implicitly she is also fighting for a form of agency that concerns us all but has become foreign to the sated bourgeoisie: she is fighting for the possibility of saying “no”, Agency(L0).

We are all “infected” with classism, racism and sexism, in one way or another, sometimes more, sometimes less. But above all we are infected with the “individual”, which underlies all these “isms” in a relational-materialist sense and “grounds” them. From the “individual” point of view of a man, a woman usually has less agency; from the point of view of a white person, a Black person often has less agency; from the point of view of a non-disabled person, a disabled person often has less agency; and from the point of view of someone who is not poor, a poor person often has less agency, and so on.

Intersectionality is always complex in reality. But because the subject design of the individual discriminates by Agency(L3) anyway, and Agency(L3) plays a role in all these forms of discrimination, women, the poor, disabled people and certain racialized people are ascribed a “diminished agency” by default, and sometimes the diminishment is also actively produced by status policing.

On top of that, what counts socially as Agency(L3) is mostly defined from within the group of those not affected: Agency(L1) paths that this group does not know (that are not in its Agency(L2)) therefore have no “value” as Agency(L3). If your second language is English, that counts; if it is Turkish, not so much. If you can read Braille and orient yourself in a room by hearing, that is your Agency(L1, L2), but it is rarely counted on Agency(L3), and so on.

But this does not apply to the agency paths that are known to the privileged group: a good education, a good job, a lot of money or power can counteract the group-based devaluation of Agency(L3). In liberalism/capitalism, people affected by these forms of discrimination can, by attaining recognized Agency(L3), escape to some extent from some of the notions of devaluation and some of the discrimination vectors of racism, classism, ableism and sexism.

Take racism. Racism is not a fixed phenomenon; it is in constant flux and keeps renegotiating its norms, directions and boundaries. Italians, Irish and Jews were not considered “white” in early America; with economic advancement that changed. There is a correlation between the restaurant prices of ethnic cuisines and the economic success of the country of origin. When a country becomes economically successful, not only are the people who come from there seen differently, the products from there also appear more valuable. Chinese food was long the epitome of cheap eats; now the most expensive restaurants are Chinese.

Feminism has not necessarily led to equality, but it has established paths that enable (some) women to rise in Agency(L1), and in the process it has socially upgraded the ascription “woman” in many contexts (Agency(L3)).

The extension of individual status to the other groups (especially over the last 50 years) has meant that today women, disabled people, queer people and Black people are also “allowed” to be successful. As a result, success, read as an expression of agency, upgrades the “diminished individual” to an “almost individual”. “Almost”, because different rules still apply (higher expectations of competence and integrity, the glass ceiling, etc.).

But of course the careers of women and Black people did not fall from the sky any more than those of white people did. They, too, were made possible by resources, often by family and social circle, by school, teachers, and coincidences, which supplied the necessary infrastructures for these careers, often accumulated over generations. All of this is quietly priced into the “individual”. Because you are your agency, you are also your infrastructures.

Racism or sexism does not disappear with the economic rise of individuals or groups in bourgeois society, but it changes clearly and visibly. The rise shifts boundaries that were previously drawn more tightly, grants permissions that were previously denied, and opens paths that were previously closed. In short: the “almost individual” is granted and conceded more “individuality”. But the status of the “almost individual” remains fragile; in a conflict it is withdrawn as quickly as a passport over criticism of Israel.

In Great Britain, as in Germany, Agency(L1) for disabled people is being restricted, according to Cheshire West, though in this case by a Supreme Court decision that operates directly at the level of fundamental rights.

The ruling effectively dismantles a landmark 2014 legal framework known as Cheshire West, which established a universal “acid test”. This means that if someone lacks the mental capacity to consent to their care and living arrangements, is under continuous supervision and control, and is not free to leave, they were legally ‘deprived of their liberty’. This triggered vital legal safeguards (DoLS), requiring an independent assessor to regularly inspect care homes, supported living arrangements, and locked units to ensure the placement is safe, justified, and in the person’s best interests. Today’s decision tears up those protections.

The whole thing is justified by the claim that some disabled people have “only limited” Agency(L2) anyway and therefore have nothing to lose.

The Court implies that individuals with profound cognitive disabilities cannot be “deprived” of liberty because their condition limits their ability to experience it, a view that devalues their fundamental rights.

From defenders of “liberalism” I always hear the objection that liberalism also always implies “respect for the freedom of the other”.

Ok, fine. But what does it mean to “respect the freedom of the other”?

It means not intervening in their paths, so as “negative freedom” it remains a function of Agency(L1). But that is distributed extremely unequally. This means not only that someone with a lot of Agency(L1) has many more opportunities to insist on their “negative freedom”; it also means that our Q-Function for recognizing the freedom of the other, Agency(L3), implicitly reads along their infrastructures.

The dividual view suggests a different mode of recognition: one that does include the freedom of Agency(L1) (though not as absolutely as liberalism does), but that does not set the boundary of ascribed “subjectivity” at Agency(L2) (which is not visible from the outside anyway) and instead locates it at Agency(L0): the ability to say “no”.

We have to learn again to see, recognize, and value one another as “resistant beings”: beings who have a right to say “no”, in every situation if possible and at a K_F_ab that is as manageable as possible.

Another exciting contribution at re:publica was the talk on “Bürgerliche Zerstörungslust” (roughly: bourgeois lust for destruction) by Carolin Amlinger and Oliver Nachtwey.

I don't want to summarize the talk here, but one thing struck me immediately while watching: there is a path-psychological connection between Covid deniers, road rage, the political effects of inflation, and fascism. Individuals often react aggressively when path opportunities they already expected are taken away from them.

Because individuals are used to attributing their path opportunities to themselves and to their “own” Agency(L2), a loss of expected path opportunities feels like an attack on them personally. The “lockdown” reduced the paths enormously; a slow cyclist cutting in ahead of you makes you hit the brakes; the paths you can walk for money get shorter under inflation. The reaction of some, though not all, is anger. It is the anger of “feeling restricted in one's autonomy”.

I am convinced: road rage is the basic emotion of fascism.

Diedrich Diederichsen captured the connection between road rage and fascism well years ago:

What in America is called “road rage”, from tailgating to drive-by shooting, is the everyday, lifeworld variant of the anger of the bourgeois and the petty bourgeois.

In traffic, one can also most readily observe it in oneself. I, for instance, regularly turn into a bicycle Nazi, which on good days I observe in myself with amusement and on bad days experience as a frightening transformation. In relation to my fellow human beings, I am then suddenly no longer interested in the fact that, and how, we put up with one another, but in the fact that they violate or could violate my chartered rights (as a cyclist). They appear to me not in their real role as a diffuse bundle of intentions, which of course are not aimed at me, but, through the paranoid perspective of the rights holder, only as curtailers of rights: dozy pedestrians blocking the bike lane, left-turning car-driver assfaces who take my right of way; the slow mum who doesn't jump aside, the nasty dad who wants to bring me down. As a cyclist, my rights do not seem truly guaranteed to me, because I know about my small real power relative to the car driver as soon as we were to enter a lawless space together, and also about my potentially greater power compared with the pedestrian. Since I doubt that my rights can be guaranteed in the face of real, in this case physical, power relations, I cling to them aggressively. They are all I have; I assert them even where nobody meant to violate them, or where someone violates them without harming me.

Road rage is path-psychologically obvious, but closely tied to the subjectivation of the individual. For what Diederichsen declares a “right” here is only the “entitlement” of phantom possession (Eva von Redecker) of paths. In road rage I believe I have a “right to the path” (“The road belongs to me!”), just as my Q-Function promised it to me. The path was already a booked asset [F3], and now I have to write it off (at least in part) [F9].

THAT MAKES YOU ANGRY!!

Road rage can also grow over time into a “road grudge”, namely when a write-off on a path in the past leads to a narrative of the “blocked life”, as Carolin Amlinger explains in the talk. One mourns the unrealized marginal utility of the aborted path. Or put differently: in a “road grudge”, one is haunted by the unredeemed opportunity dividends of the “unlived life” (Erich Fromm); see also Mark Fisher's hauntology.

F(Q_Geisterpfad) is the imagined value of the aborted path (the Geisterpfad, or ghost path). What if X had married me, if I had invested in Apple stock in 1999, if my boss had not fired me, if my wife had not left me, if I had been more careful in that accident, if this university had accepted me, if X were still alive and if Y had not happened, and so on.

Hauntology = F(Q_Geisterpfad) – F(Q_AktuellerPfad)

And once Hauntology > K_F_ab, things get dangerous.
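As a minimal sketch, the two lines above can be written out as arithmetic. The function names, the numeric values, and the idea of treating F(Q_Geisterpfad), F(Q_AktuellerPfad), and K_F_ab as plain numbers are assumptions made purely for illustration; the text does not say how these quantities would be measured.

```python
def hauntology(f_ghost_path: float, f_current_path: float) -> float:
    """Hauntology = F(Q_Geisterpfad) - F(Q_AktuellerPfad).

    Both arguments are hypothetical numeric stand-ins for the imagined
    value of the aborted path and the value of the current path.
    """
    return f_ghost_path - f_current_path


def is_dangerous(hauntology_value: float, k_f_ab: float) -> bool:
    """True once Hauntology exceeds K_F_ab (strictly greater, as in the text)."""
    return hauntology_value > k_f_ab


# Illustrative numbers only: the ghost path is imagined to be worth 10,
# the current path 4, and K_F_ab is assumed to be 5.
h = hauntology(10.0, 4.0)
print(h, is_dangerous(h, k_f_ab=5.0))
```

The point of the sketch is only that the danger condition depends on the gap between two imagined values, not on the current path alone: a better current path or a less inflated ghost path both shrink the difference.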

Here is the thing: all of us get blocked somewhere every day, some of us allow ourselves “road rage” now and then, most of us carry injuries around, and many of us are haunted by the ghosts of paths not taken. That does not make us Nazis.

But ghosts are, annoyingly, invisible, which makes it easy to set up scapegoat narratives for this anger: narratives that “harvest” the anger in order to direct it politically.

MAGA's hatred of DEI and the capitalists' hatred of “regulation”, the abandoned husband's hatred of “feminism”, Musk's rage against the “woke mind virus”, the “stab-in-the-back myth” (Dolchstoßlegende) after the First World War, the story that “the Jews control everything”, conspiracy theories in general, and the chainsaw that makes everything right again.

All of these are the right's sewer systems for the road-rage streams of blocked lives.

But the current road rage also has a “true” core: it is the Agency(L0) suppressed by the TINA path, forcing its way through the order.